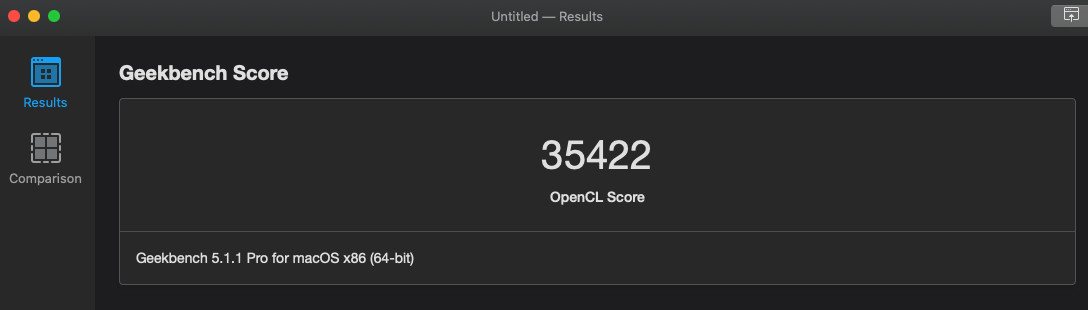

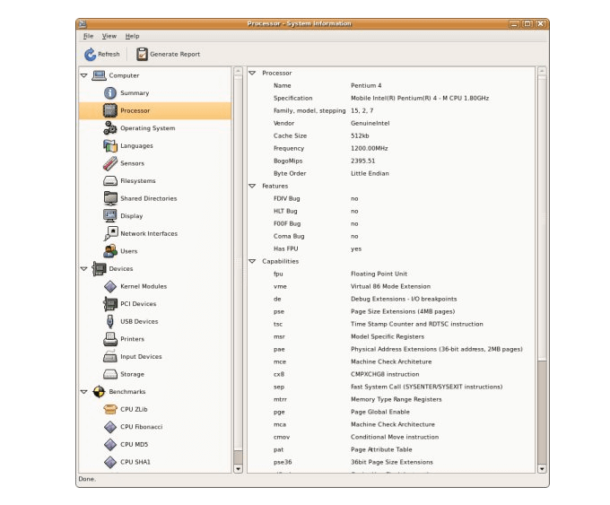

What is OpenCL?

Open Computing Language (OpenCL) is an independent, open standard for cross-platform parallel programming. It is used to accelerate supercomputers, cloud servers, PCs, mobile devices, and embedded platforms, and it has improved the speed and flexibility of applications in many market categories, including professional development tools, scientific and medical software, imaging, education, and deep learning.

OpenCL uses a programming language similar to C. It provides an API that lets programs running on a host load OpenCL kernels onto compute devices and manage device memory separately from host memory. OpenCL programs are designed to be compiled at run time, so applications that use OpenCL can be ported between different host devices.

Unlike CUDA, OpenCL is not limited to GPUs; it also targets CPUs, FPGAs… In addition, OpenCL was developed by multiple companies, whereas CUDA was developed by NVIDIA alone.

CUDA vs OpenCL: What’s the Difference?

Hardware

There are three major manufacturers of graphics accelerators: NVIDIA, AMD and Intel. NVIDIA currently holds the largest share of the market.

NVIDIA provides computing and processing solutions for mobile graphics processors (Tegra), laptop GPUs (GeForce GT), desktop GPUs (GeForce GTX), and GPU servers (Quadro and Tesla). This wide range of NVIDIA hardware can be used with both CUDA and OpenCL, but CUDA performs better on NVIDIA hardware because it was designed with that hardware in mind.

AMD creates Radeon GPUs for embedded solutions, mobile systems, laptops and desktops, and Radeon Instinct GPUs for servers. OpenCL is the primary language used for general-purpose processing on AMD GPUs.
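To make the OpenCL host and device roles described above more concrete, here is a toy, CPU-only model of the workflow in plain Python. It is not the OpenCL API: `ToyDevice`, its methods, and `scale_on_device` are hypothetical names invented for this sketch, and the "kernel" is Python source rather than OpenCL C so that it runs without any GPU or driver. The comments name the real OpenCL calls that play a roughly similar role.

```python
"""Toy, CPU-only model of the OpenCL host workflow (not the real OpenCL API)."""

# The kernel is shipped as source text, as OpenCL kernels usually are.
# Assumption: Python source stands in for OpenCL C so the sketch runs anywhere.
KERNEL_SOURCE = """
def scale(global_id, buf, factor):
    buf[global_id] = buf[global_id] * factor
"""


class ToyDevice:
    """Hypothetical stand-in for a compute device with its own memory."""

    def __init__(self):
        self._memory = {}    # device-side buffers, keyed by handle
        self._kernels = {}   # kernels built from source at run time
        self._next_handle = 0

    def build_program(self, source):
        # Similar in role to clBuildProgram: compile kernel source at run time.
        namespace = {}
        exec(compile(source, "<kernel>", "exec"), namespace)
        self._kernels = {k: v for k, v in namespace.items() if callable(v)}

    def create_buffer(self, size):
        # Similar in role to clCreateBuffer: allocate device memory.
        handle = self._next_handle
        self._next_handle += 1
        self._memory[handle] = [0.0] * size
        return handle

    def write_buffer(self, handle, host_data):
        # Similar in role to clEnqueueWriteBuffer: copy host -> device.
        self._memory[handle] = list(host_data)

    def read_buffer(self, handle):
        # Similar in role to clEnqueueReadBuffer: copy device -> host.
        return list(self._memory[handle])

    def run_kernel(self, name, handle, global_size, *args):
        # Similar in role to clEnqueueNDRangeKernel: one call per work-item.
        # A real device runs work-items in parallel; this loop is sequential.
        kernel = self._kernels[name]
        buf = self._memory[handle]
        for global_id in range(global_size):
            kernel(global_id, buf, *args)


def scale_on_device(host_data, factor):
    """Host program: build the kernel, move data over, run, read back."""
    device = ToyDevice()
    device.build_program(KERNEL_SOURCE)
    buf = device.create_buffer(len(host_data))
    device.write_buffer(buf, host_data)
    device.run_kernel("scale", buf, len(host_data), factor)
    return device.read_buffer(buf)


if __name__ == "__main__":
    print(scale_on_device([1.0, 2.0, 3.0], 2.0))
```

Because the host list is copied into a separate device buffer, the kernel never touches the caller's data directly. This mirrors the point that device memory is managed separately from host memory.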
CUDA is both a platform for parallel computing and a programming model. It was developed by NVIDIA for general-purpose computing on NVIDIA’s graphics processing unit (GPU) hardware.

With CUDA programming, developers can use the power of GPUs to parallelize calculations and speed up processing-intensive applications. For GPU-accelerated applications, the sequential parts of the workload run single-threaded on the machine’s CPU, and the compute-intensive parts run in parallel on thousands of GPU cores. Developers can use CUDA to write programs in popular languages (C, C++, Fortran, Python, MATLAB, etc.) and add parallelism to their code with a few basic keywords.
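The split between sequential host code and a parallel kernel can be sketched without a GPU. The following plain-Python model is an illustration only, not CUDA code: `vector_add_kernel`, `launch`, and `vector_add` are hypothetical names, and the logical threads run one after another instead of in parallel. In CUDA C++ the kernel would be marked `__global__`, would compute its index from the built-in `blockIdx.x`, `blockDim.x`, and `threadIdx.x`, and would be launched with the `<<<blocks, threads>>>` syntax.

```python
"""CPU-only sketch of the CUDA execution model (plain Python, no GPU needed)."""


def vector_add_kernel(block_idx, block_dim, thread_idx, a, b, out):
    # Each logical thread handles one element. In CUDA C++ the index would be
    # blockIdx.x * blockDim.x + threadIdx.x inside a __global__ function.
    i = block_idx * block_dim + thread_idx
    if i < len(out):  # guard: the grid may contain more threads than elements
        out[i] = a[i] + b[i]


def launch(kernel, num_blocks, threads_per_block, *args):
    # Stand-in for kernel<<<num_blocks, threads_per_block>>>(args...).
    # A GPU would run these logical threads in parallel; here they are looped.
    for block_idx in range(num_blocks):
        for thread_idx in range(threads_per_block):
            kernel(block_idx, threads_per_block, thread_idx, *args)


def vector_add(a, b, threads_per_block=4):
    # Sequential host part: allocate the output and choose the grid size,
    # rounding up so that every element is covered by some thread.
    n = len(a)
    out = [0.0] * n
    num_blocks = (n + threads_per_block - 1) // threads_per_block
    launch(vector_add_kernel, num_blocks, threads_per_block, a, b, out)
    return out


if __name__ == "__main__":
    print(vector_add([1.0, 2.0, 3.0, 4.0, 5.0],
                     [10.0, 20.0, 30.0, 40.0, 50.0]))
```

With five elements and four threads per block, the launch uses two blocks and eight logical threads. The bounds check in the kernel keeps the three extra threads from writing past the end of the data.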
